How I Completed a 146-Question Analyst RFI in 24 Hours

Agentic PMM for Analyst Relations

Most conversations about AI in marketing focus on content generation. Writing blog posts. Generating social copy. Creating ad variations.

That is the least interesting application.

The real opportunity is in complex, multi-stakeholder projects where the primary challenge is not writing but coordination, consistency, and context management. Analyst relations is a textbook example.

At my last company, I led the submission for a major industry analyst evaluation. It required a tiger team of 25-30 people working long hours for two weeks. I flew to Seattle to sit with the core team in person. Product, engineering, finance, customer success, legal, sales ops. Everyone had their sections. A project manager kept it all on track. It was a massive, coordinated effort.

This year, I did the same type of submission. 146 questions across 13 sections. Financial data, product capabilities, competitive positioning, customer metrics, pricing strategy, innovation roadmap. The full scope.

My team: Claude Code and me.

Total time: about 24 working hours.

A typical analyst RFI involves:

Hundreds of questions spanning product, finance, legal, customer success, and competitive positioning

Input from many stakeholders across the company

Strict formatting and character limit requirements

Cross-referencing across sections to ensure consistency

Strategic judgment calls on positioning, data disclosure, and framing

A hard deadline with no extensions

This is exactly the kind of work where agents excel. Not because they are creative. Because they are systematic.

The Framework

After running a full analyst RFI with AI agents, here is the framework I would use again. This is Agentic PMM in practice.

Step 1: Build the Context Layer

Before an agent writes a single word, feed it everything:

Prior-year submissions (baseline, not blank page)

Analyst briefing and inquiry notes

Product documentation and roadmap

Financial metrics and customer data

Competitive intelligence

Internal messaging and positioning frameworks

But loading documents into folders is not enough. The game changer was creating markdown files that explained what each document is, why it matters, and how the agent should use it. A source priority hierarchy. Instructions on which documents to trust when sources conflict.
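To make the source priority hierarchy concrete, here is a minimal sketch of the same rule expressed as code. In the project described here the hierarchy lived in markdown instructions for the agent, not in a script; the source names, the `Fact` type, and the sample values below are all hypothetical placeholders.

```python
from dataclasses import dataclass

# Hypothetical source types, ordered from most trusted to least trusted.
SOURCE_PRIORITY = ["finance_export", "product_docs", "prior_submission", "analyst_notes"]


@dataclass
class Fact:
    key: str     # what the fact describes, e.g. "customer_count"
    value: str   # the value as stated in the source
    source: str  # must be one of SOURCE_PRIORITY


def resolve(facts: list[Fact]) -> dict[str, Fact]:
    """For each key, keep the fact that comes from the highest-priority source."""
    rank = {name: i for i, name in enumerate(SOURCE_PRIORITY)}
    best: dict[str, Fact] = {}
    for fact in facts:
        current = best.get(fact.key)
        if current is None or rank[fact.source] < rank[current.source]:
            best[fact.key] = fact
    return best


# Placeholder values, invented purely for illustration.
facts = [
    Fact("customer_count", "480", "prior_submission"),
    Fact("customer_count", "512", "finance_export"),
]
resolved = resolve(facts)
print(resolved["customer_count"].source)  # finance_export
```

The point of writing the rule down, in markdown or in code, is that a conflict between sources gets resolved the same way every time instead of depending on which document the agent happened to read last.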

I built custom agent skills. Context that taught Claude Code how to interpret analyst evaluation criteria, how to weight different source types, and how to distinguish between what is GA today versus what is on the roadmap. I wrote a detailed spec that enforced specific terminology (the exact product names to use, phrases to avoid), set character limits per question, and defined tagging conventions for missing data.

That upfront investment in the spec and context architecture was the most important work of the entire project. It turned a general-purpose LLM into a purpose-built analyst relations machine.

And here is the thing: I will not have to do most of that work again. The spec, the skills, the source hierarchy. It carries forward. Next year, the submission gets even faster.

The depth of context determines output quality. The specificity of instruction determines whether that output is generic or sharp. This is not “give the AI your docs and hope for the best.” This is building a knowledge base with reasoning instructions that teach the agent how to think about the material.

Step 2: Map Questions to Sources

Have the agent read every question and identify:

Which questions can be answered from existing materials

Which questions need updated data (and from whom)

Which questions require strategic decisions from leadership

This produces two things: a draft readiness score and a data request list with specific names attached to specific gaps.
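A rough sketch of how those two outputs could be derived once each question has been triaged. The three statuses mirror the list above; the `Question` fields, the question IDs, and the sample ask are assumptions made for illustration, not the structure actually used.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    ANSWERABLE = "answerable from existing materials"
    NEEDS_DATA = "needs updated data"
    NEEDS_DECISION = "needs a leadership decision"


@dataclass
class Question:
    qid: str
    status: Status
    owner: Optional[str] = None  # who can close the gap
    ask: Optional[str] = None    # the exact data point requested


def readiness(questions: list[Question]) -> float:
    """Share of questions that can be drafted from existing materials."""
    if not questions:
        return 0.0
    ready = sum(q.status is Status.ANSWERABLE for q in questions)
    return ready / len(questions)


def data_requests(questions: list[Question]) -> dict[str, list[str]]:
    """Group the open data asks by the person or team who owns them."""
    requests: dict[str, list[str]] = {}
    for q in questions:
        if q.status is Status.NEEDS_DATA:
            requests.setdefault(q.owner or "unassigned", []).append(f"{q.qid}: {q.ask}")
    return requests


# Hypothetical triage results.
questions = [
    Question("Q1", Status.ANSWERABLE),
    Question("Q2", Status.NEEDS_DATA, owner="finance",
             ask="net revenue retention for the last fiscal year"),
    Question("Q3", Status.NEEDS_DECISION),
    Question("Q4", Status.ANSWERABLE),
]
print(readiness(questions))
print(data_requests(questions))
```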

But the real power is in how you ask for the data. The agent does not just flag “need financial data from finance.” It identifies the exact data point needed, frames the request so the stakeholder knows precisely what to provide, and removes much of the guesswork. No back and forth. No “can you clarify what you need?” They get a clear ask, they provide the answer, and they can see in real time how their input updates the response.

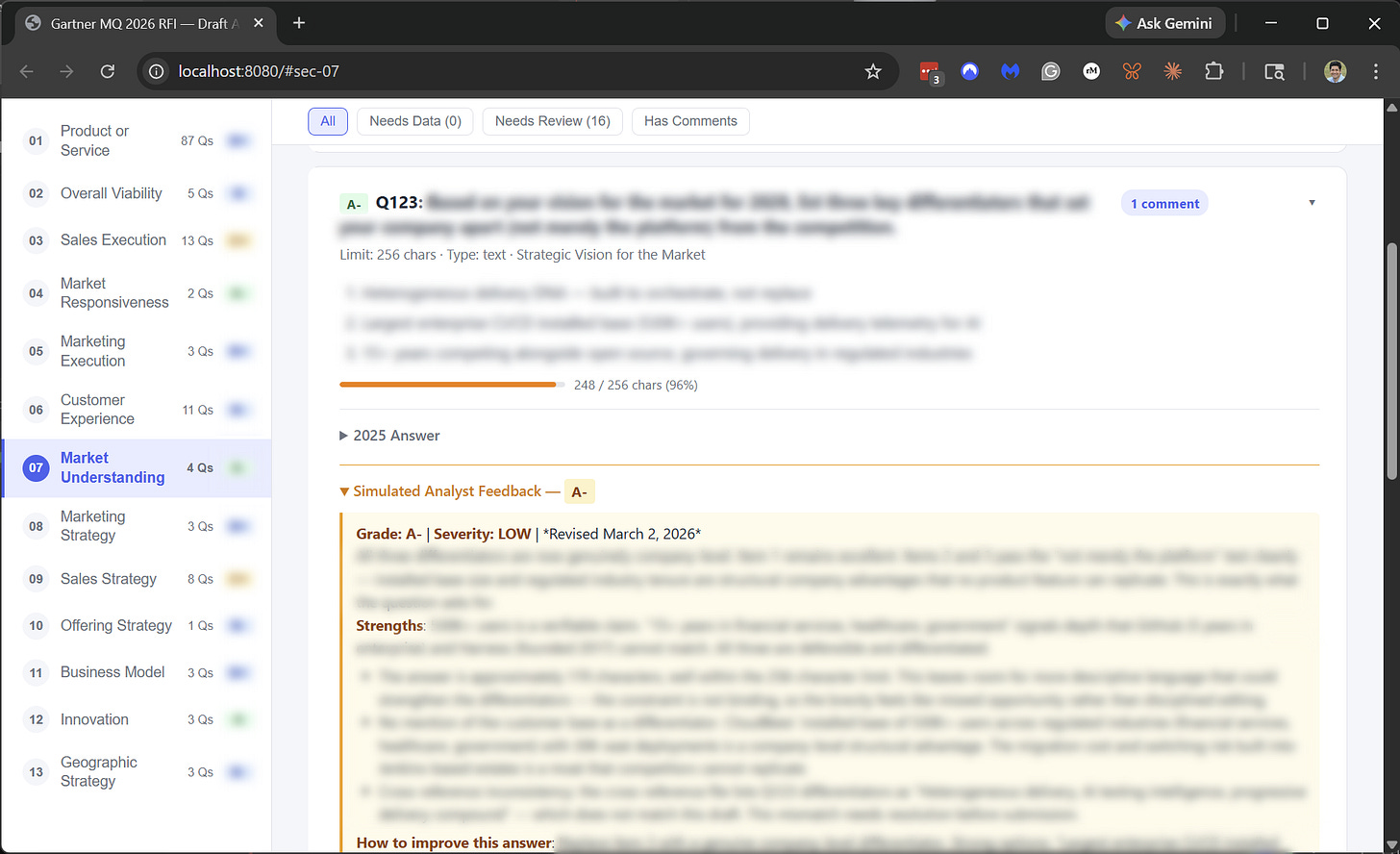

I vibe-coded a web application for this. A single-page app backed by Supabase, where every stakeholder could see the full set of answers, read the simulated analyst feedback alongside each response, and provide their input. No meetings. No email chains. Everyone in one place.

That feedback loop completely changes the dynamic. Stakeholders are not filling out a spreadsheet and hoping it ends up in the right place. They can see the impact of their contribution immediately. It makes the whole process faster and dramatically reduces friction.

Step 3: Draft with Constraints

Set explicit rules before drafting:

Terminology enforcement (product names, positioning language)

Tone guidelines (no superlatives, evidence-based only)

Character limits per question

What counts as GA vs. planned

Which claims need citations

The agent drafts all answers in one pass, applying constraints uniformly. A human team working across sections will inevitably have different interpretations of these rules. The agent does not.
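Some of these rules are mechanical enough to check deterministically after the agent drafts. A minimal sketch, assuming a hypothetical `Spec` with a character limit, a list of banned phrases, and a map from wrong product names to approved ones; the product names and phrases are invented for the example.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Spec:
    char_limit: int
    banned_phrases: list[str] = field(default_factory=list)
    # wrong name -> approved name
    terminology: dict[str, str] = field(default_factory=dict)


def check(answer: str, spec: Spec) -> list[str]:
    """Return a list of human-readable rule violations for one answer."""
    issues = []
    if len(answer) > spec.char_limit:
        issues.append(f"over limit by {len(answer) - spec.char_limit} chars")
    lowered = answer.lower()
    for phrase in spec.banned_phrases:
        # Whole-phrase match, case-insensitive.
        if re.search(rf"\b{re.escape(phrase.lower())}\b", lowered):
            issues.append(f"banned phrase: {phrase}")
    for wrong, approved in spec.terminology.items():
        if wrong.lower() in lowered:
            issues.append(f"use '{approved}' instead of '{wrong}'")
    return issues


# Hypothetical spec and draft answer.
spec = Spec(
    char_limit=30,
    banned_phrases=["best-in-class"],
    terminology={"Acme Cloud": "Acme Platform"},
)
issues = check("Acme Cloud is a best-in-class option.", spec)
for issue in issues:
    print(issue)
```

A check like this does not replace the agent applying the rules while drafting. It gives you a cheap second line of defense that behaves identically on answer 1 and answer 146.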

But the agent is not doing the thinking. It is handling the pattern matching and the grunt work. I still had to bring the pieces together. The strategic framing, the positioning choices, and the judgment on how to present a nuanced story. That is human work.

What changed is the speed of iteration. In past submissions, I would read every answer in the full document and manually adjust the positioning so the narrative flowed naturally across sections. That editing pass alone could take days. With agents, I could describe the adjustment I wanted, and it would be applied immediately to every relevant answer. The same work that used to take days happened in about an hour. My judgment still drove every decision. The agent just made it possible to act on that judgment at scale.

One of the things that changed my mental model: Claude Code ran autonomously overnight. I would set it on a long job and go to sleep. I woke up to completed work. That is not how traditional project management works. You do not assign a task at 11 PM and get it back at 6 AM. But with agents, the clock is not a constraint. The project moved forward while I was sleeping.

Step 4: Cross-Reference Everything

This is the step most teams skip or do poorly. Every answer must be consistent with every other answer.

Revenue figures

Customer counts

Capability claims

Competitive framing

Pricing logic

Deployment model descriptions

The agent checks every answer against every other answer and flags contradictions. In a 146-question RFI, this is not optional. It is the difference between looking polished and looking disorganized.

Here is why this matters so much: analysts read the entire submission. They are looking for the story across all 13 sections, not evaluating each answer in isolation. If you claim 500 customers in Section 2 and reference “nearly 600” in a case study summary in Section 8, that inconsistency registers. If your competitive positioning in the product section emphasizes one differentiator but your innovation section tells a different story, the analyst notices. These are the kinds of errors that erode credibility, and in a traditional multi-author process, they are almost impossible to catch completely.
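The customer-count example can be sketched as a deterministic check. This is an illustration of the idea only: in practice the agent did the cross-referencing with the full submission in context, and a regex like the one below (an assumption) covers just one narrow kind of claim.

```python
import re
from collections import defaultdict

# Matches "<number> customers"; hedges such as "nearly" are ignored.
CUSTOMER_CLAIM = re.compile(r"(\d[\d,]*)\+?\s+customers", re.IGNORECASE)


def customer_claims(answers: dict[str, str]) -> dict[int, list[str]]:
    """Map each customer count found in the text to the sections that state it."""
    claims: dict[int, list[str]] = defaultdict(list)
    for section, text in answers.items():
        for match in CUSTOMER_CLAIM.finditer(text):
            count = int(match.group(1).replace(",", ""))
            claims[count].append(section)
    return dict(claims)


def contradictions(answers: dict[str, str]) -> dict[int, list[str]]:
    """Return the claims only when sections disagree on the number."""
    claims = customer_claims(answers)
    return claims if len(claims) > 1 else {}


answers = {
    "Section 2": "We serve 500 customers across three regions.",
    "Section 8": "The case study reflects a base of nearly 600 customers.",
}
print(contradictions(answers))
```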

No single person on a traditional team can hold 146 answers in their head simultaneously. The agent can. When I was reviewing Section 9 on pricing, the agent could instantly check whether my framing was consistent with what I said in Sections 1 and 3. That cross-referencing at scale is something humans cannot replicate, no matter how good the team is.

When I ran this process with a 25-person team at my previous company, the consistency review was its own workstream. Someone had to read the entire document end-to-end multiple times, cross-checking numbers, language, and claims. It was tedious, error-prone, and always time-pressured because it happened at the end when the deadline was already breathing down your neck.

With agents, cross-referencing happens continuously. Not as a final pass. Not as a last-minute scramble. The agent holds every answer in context simultaneously and catches contradictions as they are introduced, not after they have been baked into the final draft for a week. That single capability alone justifies the entire approach.

Step 5: Build a Quality Gate with a Simulated Analyst

This was the move I did not expect to matter as much as it did.

I built a simulated analyst agent that reviewed every answer the way a real industry analyst would. It looked for unsupported claims, vague language, missing quantification, positioning that did not differentiate, and answers that contradicted other answers in the submission.

The feedback was sharp. Genuinely sharp. It caught things like: “You claim this capability is differentiated, but you have not explained what competitors lack.” Or: “This revenue growth narrative does not address the churn question the analyst will ask in follow-up.”

This quality gate meant that by the time I reviewed the answers, the obvious problems had already been flagged. I could focus on the strategic decisions instead of catching basic issues.
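One way to set up a reviewer like this is to turn the checks into an explicit rubric that is sent along with each answer. The sketch below only assembles the prompt text; the five criteria come from the description above, while the prompt wording and the function name are assumptions, and no particular model API is implied.

```python
# The five checks come from the description above; the prompt wording is assumed.
REVIEW_CRITERIA = [
    "unsupported claims",
    "vague language",
    "missing quantification",
    "positioning that does not differentiate",
    "contradictions with other answers in the submission",
]


def build_review_prompt(question: str, answer: str, related_answers: list[str]) -> str:
    """Assemble a review prompt for one answer, including related answers for context."""
    criteria = "\n".join(f"- {c}" for c in REVIEW_CRITERIA)
    related = "\n".join(f"- {a}" for a in related_answers) or "- (none)"
    return (
        "You are an industry analyst reviewing a vendor RFI response.\n"
        f"Flag any of the following:\n{criteria}\n\n"
        f"Question: {question}\n"
        f"Answer: {answer}\n"
        f"Related answers elsewhere in the submission:\n{related}\n"
    )


prompt = build_review_prompt(
    question="Describe your key differentiators.",
    answer="Our platform is differentiated by its automation.",
    related_answers=[],
)
print(prompt)
```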

Step 6: Separate Decisions from Drafting

Create a clear list of every strategic decision the agent cannot make:

Should we disclose this metric?

How do we frame this gap?

Which competitive story do we lead with?

How aggressive is our roadmap commitment?

Present each decision with context, options, and tradeoffs. The decision-maker reviews 20 judgment calls instead of 146 full answers. That is a fundamentally different use of their time.

This is the step that changes what it means to be the person leading the submission. In the traditional model, the AR lead is drowning in logistics. Chasing contributors. Reformatting answers. Reconciling conflicting inputs from six different teams. By the time you get to the strategic decisions, you are exhausted, and the deadline is tomorrow. You make those calls with whatever mental energy you have left.

When the agent handles drafting, cross-referencing, and consistency, the strategic decisions surface cleanly. You are not hunting for them buried inside 80 pages of text. They are presented to you: here is the question, here is what we said last year, here is what the data supports, here are three options for how to frame it, and here is what the simulated analyst would push back on for each option.
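That presentation maps naturally onto a small record per decision. A sketch, with field names chosen to match the list in the paragraph above; the types, the `render` helper, and the sample content are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Option:
    framing: str
    analyst_pushback: str  # what the simulated analyst would challenge


@dataclass
class Decision:
    question_id: str
    question: str
    last_year: str
    data_supports: str
    options: list[Option] = field(default_factory=list)


def render(decision: Decision) -> str:
    """Format one decision as a short brief for the decision-maker."""
    lines = [
        f"{decision.question_id}: {decision.question}",
        f"Last year: {decision.last_year}",
        f"Data supports: {decision.data_supports}",
    ]
    for i, option in enumerate(decision.options, start=1):
        lines.append(f"Option {i}: {option.framing}")
        lines.append(f"  Likely pushback: {option.analyst_pushback}")
    return "\n".join(lines)


# Hypothetical example content.
brief = render(Decision(
    question_id="Q42",
    question="Should we disclose this metric?",
    last_year="Not disclosed.",
    data_supports="Metric improved year over year.",
    options=[
        Option("Disclose the exact figure.", "Asks how it compares to peers."),
        Option("Disclose a range.", "Asks why the exact figure is withheld."),
    ],
))
print(brief)
```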

That reframing is the whole point of Agentic PMM. The senior person’s time goes to the work that actually moves the dot on the quadrant. Not formatting. Not chasing stakeholders. Not reconciling inconsistencies. The 20 decisions that require real judgment get 100% of your attention instead of competing with the rest of the answers that just need someone to type them up.

The output is better because the decision-maker is sharper. They are not fatigued from the grind. They are fresh and focused on the choices that matter.

Step 7: Iterate on Quality

Because drafting is fast, you get multiple review passes:

Terminology and consistency sweep

Strategic positioning review

Data accuracy verification

Final tone and formatting pass

In the traditional model, you are lucky to get one full review before the deadline. The submission goes out, and you know there are things you would have caught with one more day. But the deadline does not care.

With agents, the economics of iteration flip completely. A full review pass that would take a human at least a day takes 45 minutes. You find something in Section 11 that changes how you want to frame the answer in Section 3. In the traditional model, that is a painful rework. With an agent, you describe the change, and it immediately propagates across every affected answer.

I ran three full review passes on this submission. Each one improved the quality materially. The first pass caught structural issues and positioning gaps. The second tightened the narrative and strengthened the competitive framing. The third was a final polish on tone and specificity, and on ensuring every claim was backed by evidence.

Three passes. On a 146-question submission. Before the deadline. In a traditional process, that would have required an extra week that the team did not have.

This is the part people underestimate about working with agents. Speed is not just about finishing faster. It is about having time to make the work better. When the grind takes 70% of your timeline, quality gets whatever is left over. When the grind is handled, quality gets the full runway.

A Note on Model Agnosticism

Mid-project, Opus went down. Not ideal when you are on a deadline.

I switched to GPT-5.2 and kept moving. The work continued. The quality held. When Opus came back online, I had it review the GPT-5.2 work and smooth out any stylistic inconsistencies.

This taught me something important: you should not build a workflow that depends on a single model. Model agnosticism is not a nice-to-have. It is a survival skill. The best Agentic PMM workflows are designed so you can swap the underlying model without losing momentum.
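In code, the simplest form of this is to treat every model as an interchangeable callable and try them in order. A minimal sketch: `primary` and `backup` are stand-in functions, not real SDK calls, and in practice each would wrap whichever provider client you use.

```python
from typing import Callable, Sequence

# A "model" here is any callable that turns a prompt into text.
Model = Callable[[str], str]


class AllModelsFailed(RuntimeError):
    """Raised when every configured model errors out."""


def complete(prompt: str, models: Sequence[tuple[str, Model]]) -> tuple[str, str]:
    """Try each model in order; return (model_name, output) from the first that works."""
    errors = []
    for name, call in models:
        try:
            return name, call(prompt)
        except Exception as exc:  # any provider failure triggers the fallback
            errors.append(f"{name}: {exc}")
    raise AllModelsFailed("; ".join(errors))


def primary(prompt: str) -> str:
    # Stand-in for a provider that is currently down.
    raise ConnectionError("service unavailable")


def backup(prompt: str) -> str:
    # Stand-in for a second provider that is up.
    return f"draft for: {prompt}"


used, text = complete("Q17 pricing answer", [("primary", primary), ("backup", backup)])
print(used, "->", text)
```

Because the spec and context live in files rather than in one provider's configuration, the fallback model starts from the same instructions as the primary one.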

What This Changes

The traditional analyst relations playbook assumes that the hard part is production. Gathering data, writing answers, coordinating reviewers, and managing timelines.

With agents, the hard part shifts to judgment. What to say, what to hold back, how to frame your narrative. That is what the analyst is actually evaluating. Not your ability to fill out a form. Your ability to articulate a coherent strategy.

When you remove the production bottleneck, the quality of your strategic thinking becomes the differentiator. That is where product marketers should be spending their time anyway.

That is Agentic PMM. Not AI writing for you. AI that gives you the infrastructure to think at scale.